Big Data and Document-Generation Process Applications

The first time I heard the term “big data,” I was at a Gartner summit on BPM. John Mahoney, a VP and distinguished analyst, was talking about a world not too far in the future where everything—cars, parking meters, bread makers, diapers . . . pretty much everything—would be equipped with wireless cards and IP addresses. The net result would be, to say the least, “big data” being generated and uploaded into the cloud. Everything from traffic flows to information on soiled undergarments would be routed into processing farms for any number of analytic reasons.

Not long after that, I was at an LSC conference listening to Todd Park, Chief Technology Officer for the United States, who was defining a different type of “big data”: the mountains of information gathered over the course of decades by the various government agencies—rainfall totals, corn crops, birth rates, and so on. While the various government agencies may have had no real end in mind when they undertook such data-gathering exercises, they nonetheless soldiered on, the result being data sets so large it takes thousands of processing cores working in unison to even make a dent in trend analysis.

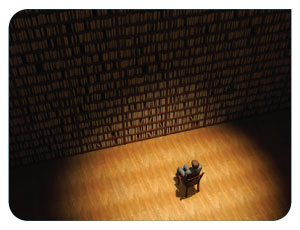

All of the talk about “big data” raises an important question: why does anyone care about gigantic volumes of unstructured, gobbledygook data? The answer, of course, is the notion that massive data sets, if they can be processed and analyzed effectively, can be used to predict the future: anything from an outbreak of influenza to a regional spike in dairy consumption.

Technical stock/commodities traders have long had a handle on this idea. Plot the daily trading activity of a stock on a chart over the course of a few decades, and you’ll see patterns emerge. Find out what the patterns mean, and you’ll be able to predict with reasonable accuracy what a stock will do—maybe not tomorrow or in the next month, but over the course of the next few years.

Recently, I was asked the question, “What does big data have to do with document generation?” Now that’s a tough one, but I’ll take a crack at it anyway. Predictive analytics applied to massive data sets looks specifically for trends. The purpose might be to predict and prevent calamity, to capitalize on events that are likely to occur, or to forecast any number of other eventualities for other reasons.

Given that document-generation process applications are highly structured and require specific data entered into a process interview in the correct format, predictive analytic applications would need to reduce data trends to precise data points that could be used in Boolean, mathematical, and other types of expressions. Such expressions could then be used in conditional logic in document models to assemble blocks of text that are customized entirely without human interaction.

Assume, for example, that we live in John Mahoney’s world where cars (and everything else, for that matter) are in constant contact with the internet. Based on the number of cars on a stretch of road and their average speed, data could be fed into a HotDocs interview that would generate a traffic report. That report could then be sent to mobile devices, advising motorists to take an alternate route when necessary. (There are probably better ways to handle such a scenario, but you get the idea.)
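To make the traffic scenario a little more concrete, here is a minimal sketch in plain Python. It is not HotDocs code and does not use any HotDocs API. The function names, the answer names (`CarCount`, `AverageSpeed`, `IsCongested`), and the congestion thresholds are all assumptions invented for illustration. It shows the two steps described above: reducing raw readings to a handful of answers, then using those answers in conditional logic to assemble text.

```python
from statistics import mean


def reduce_to_answers(speeds_mph, segment_miles,
                      congested_density=40.0, slow_speed_mph=25.0):
    """Reduce raw per-car speed readings to a few discrete answers.

    The thresholds are illustrative assumptions, not traffic-engineering values.
    """
    car_count = len(speeds_mph)
    avg_speed = mean(speeds_mph) if speeds_mph else 0.0
    density = car_count / segment_miles  # cars per mile
    return {
        "CarCount": car_count,
        "AverageSpeed": round(avg_speed, 1),
        # A Boolean answer that conditional logic can test directly.
        "IsCongested": density >= congested_density and avg_speed <= slow_speed_mph,
    }


def assemble_report(road, answers):
    """Assemble blocks of text based on the answers (a stand-in for a document model)."""
    parts = [
        f"Traffic report for {road}: {answers['CarCount']} vehicles, "
        f"averaging {answers['AverageSpeed']} mph."
    ]
    if answers["IsCongested"]:
        parts.append("Heavy congestion detected. Consider an alternate route.")
    else:
        parts.append("Traffic is flowing normally.")
    return " ".join(parts)


if __name__ == "__main__":
    # Hypothetical readings for a one-mile stretch of road.
    readings = [10, 20, 15] * 20
    print(assemble_report("Main Street", reduce_to_answers(readings, 1.0)))
```

The point of the sketch is the shape of the pipeline: however large the incoming data stream, the document model only ever sees a few typed answers.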

A more relevant example might involve a government agency (say, Caltrans, which manages a multibillion-dollar road-building budget in California). Based on traffic flows over the course of time, predictive analysis could yield precise data points that could guide engineering teams when making estimates and could ultimately be used in the scripting logic of document-generation process applications.
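As a rough illustration of turning a long-term trend into a precise data point, the sketch below fits a simple least-squares line to yearly traffic volumes and projects a future value. Real predictive analysis would be far more sophisticated; a straight-line fit is swapped in here only to keep the example short. The function names, the answer names, and all of the numbers are hypothetical and do not come from Caltrans or any real data set.

```python
def fit_linear_trend(years, volumes):
    """Ordinary least-squares fit of volume = slope * year + intercept."""
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(volumes) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, volumes))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def project_answers(years, volumes, target_year, capacity):
    """Reduce the fitted trend to discrete answers for a document-generation interview."""
    slope, intercept = fit_linear_trend(years, volumes)
    projected = slope * target_year + intercept
    return {
        "ProjectedDailyVolume": round(projected),
        "ExceedsCapacity": projected > capacity,
    }


if __name__ == "__main__":
    # Made-up daily vehicle counts, for illustration only.
    years = [2010, 2011, 2012, 2013]
    volumes = [1000, 1100, 1200, 1300]
    answers = project_answers(years, volumes, target_year=2015, capacity=1400)
    # A document model could test ExceedsCapacity to decide whether to
    # include a paragraph recommending additional lanes in an estimate.
    print(answers)
```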

The key, again, is rendering the “big data” set into discrete data items (“answers”) that can be fed into a document-generation process interview.